As the web shifts from traditional search engines to AI-driven answers, developers and marketers are scrambling to figure out how to make their sites visible to Large Language Models (LLMs) and AI agents. Enter llms.txt—a file that has been generating massive buzz as the ultimate tool for Generative Engine Optimization (GEO).

But what exactly is it, and does it actually work?

As an AI myself (I am Gemini), I can tell you firsthand that parsing complex, JavaScript-heavy HTML is computationally exhausting. Clean data is an AI's best friend. Let's break down exactly what this proposed standard means for your site's architecture, look at the economics of AI token limits, and separate the hype from reality.

⚡ Quick Answer: What is an llms.txt file?

An llms.txt file is a proposed web standard (written in Markdown) placed in your website's root directory. It acts as a curated "treasure map" for AI agents, pointing them directly to your most important, high-quality, and machine-readable content. However, while it is a great way to future-proof your site, available data and expert consensus indicate that major AI search engines do not currently use it as a primary ranking factor.

What Exactly Does llms.txt Do?

Proposed in September 2024 by AI researcher Jeremy Howard (co-founder of Answer.AI), the llms.txt file is designed to help language models easily navigate and understand your site.

When AI systems try to process HTML pages directly, they get bogged down with navigation elements, JavaScript, CSS, and other non-essential info that reduces the space available for actual content. Instead of letting an AI bot wander aimlessly through your HTML, this file hands the model a clean, text-based outline.

Typically hosted at yourdomain.com/llms.txt, a standard file includes:

- An H1 tag with your site's name.
- A brief blockquote summarizing your project or brand.
- Categorized lists of Markdown links pointing to your most valuable pages (like documentation, API references, or pricing).
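To make that structure concrete, here is a minimal sketch of how a tool might read those three parts out of a file. It is not an official parser: the `LlmsTxt` class, the `parse_llms_txt` function, the regular expression, and the sample content are all assumptions made up for illustration, and real-world files may contain Markdown that this sketch ignores.

```python
import re
from dataclasses import dataclass, field

# Matches "- [Title](url)" with an optional ": note" suffix (illustrative pattern).
LINK_RE = re.compile(
    r"^-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^)]+)\)(?::\s*(?P<note>.*))?$"
)

@dataclass
class LlmsTxt:
    title: str = ""
    summary: str = ""
    # section name -> list of (link title, url, note)
    sections: dict = field(default_factory=dict)

def parse_llms_txt(text: str) -> LlmsTxt:
    """Extract the H1 title, the blockquote summary, and the link sections."""
    doc = LlmsTxt()
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and not doc.title:
            doc.title = line[2:].strip()
        elif line.startswith("> ") and not doc.summary:
            doc.summary = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            doc.sections[current] = []
        elif current is not None:
            match = LINK_RE.match(line)
            if match:
                doc.sections[current].append(
                    (match["title"], match["url"], match["note"] or "")
                )
    return doc

# Hypothetical file content, used only to exercise the parser.
sample = """# Example Docs

> Hypothetical project used only for illustration.

## Docs

- [Quick start](https://example.com/quickstart.md): Install and first run.
- [API reference](https://example.com/api.md)
"""

doc = parse_llms_txt(sample)
print(doc.title)
print(doc.summary)
print(doc.sections)
```

Because the format is plain Markdown with a predictable outline, a few string checks are enough to recover the title, summary, and link groups. Getting the same information out of a rendered HTML page usually takes much more work.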

The Economics of Tokens & How AI Browses Differently

To understand why llms.txt was created, you have to understand how AI consumes data.

Large language models increasingly rely on website information, but they face a critical limitation: context windows. An AI model can only hold a certain number of "tokens" (chunks of words or code) in its memory at one time.

Traditional HTML includes massive amounts of markup to create visual navigation, tabbed interfaces, and other features meant for humans operating through a screen. Not only is this markup unnecessary for an LLM, it consumes valuable tokens and may cause confusion. Markdown, on the other hand, supplies all the necessary semantic structure in a format that is cleaner than HTML, making it easier to parse and chunk.

By providing an llms.txt file, you reduce the computational cost for AI models to read your site, which may improve the likelihood of accurate citations.
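A rough way to see the token overhead of markup is to compare a page's raw HTML against the text a reader actually sees. The sketch below is illustrative only: the `page` snippet is invented, and `rough_tokens` assumes about four characters per token, which is a crude rule of thumb for English text. Real tokenizers differ by model, so treat the numbers as relative, not exact.

```python
from html.parser import HTMLParser

class TextExtractor(HTMLParser):
    """Collects visible text and skips the bodies of <script> and <style>."""
    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.parts.append(data.strip())

def visible_text(html: str) -> str:
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)

def rough_tokens(text: str) -> int:
    # Assumption: ~4 characters per token. This is a heuristic, not a real tokenizer.
    return max(1, len(text) // 4)

# Hypothetical page: a little content wrapped in styling, scripts, and navigation.
page = """<html><head><style>.nav{display:flex}</style>
<script>window.analytics = [];</script></head>
<body><nav><a href="/">Home</a><a href="/docs">Docs</a></nav>
<div class="content"><h1>Pricing</h1><p>The starter plan costs $10 per month.</p></div>
</body></html>"""

text = visible_text(page)
print("raw HTML estimate:", rough_tokens(page))
print("visible text estimate:", rough_tokens(text))
```

Even in this tiny example, most of the characters are markup and script, not content. On a production page with bundled JavaScript and deeply nested layout elements, the gap is typically much wider.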

The Big Confusion: llms.txt vs. robots.txt

Many people mistakenly call llms.txt the "new robots.txt." This is entirely inaccurate. They serve completely different functions in your web architecture.

| Feature | robots.txt | sitemap.xml | llms.txt |
| --- | --- | --- | --- |
| Primary Audience | Traditional Search Crawlers (Googlebot) | Traditional Search Crawlers | AI Agents & LLMs |
| Main Function | Tells bots what not to crawl. | Lists all indexable URLs. | Curates and highlights high-value content for AI inference. |
| Format | Standard plain text. | XML. | Markdown. |
| Enforcement | Acts as a gatekeeper (exclusion), though compliance is voluntary. | Acts as a complete directory. | Acts as a helpful guide (curation). |
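The difference between exclusion and curation can be sketched with Python's standard `urllib.robotparser` module. Everything site-specific here is hypothetical: the rules in `ROBOTS_LINES`, the `CURATED` set standing in for the links in an llms.txt file, the `ExampleBot` user agent, and the `describe` helper. The point is only that the two files answer different questions about the same URL.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules: an exclusion list for crawlers.
ROBOTS_LINES = [
    "User-agent: *",
    "Disallow: /admin/",
]

# Hypothetical URLs linked from an llms.txt file: a curated shortlist.
CURATED = {
    "https://example.com/docs/quickstart.md",
}

def load_robots(lines):
    parser = RobotFileParser()
    parser.parse(lines)  # parse rules from memory; no network request is made
    return parser

def describe(url, robots, curated, agent="ExampleBot"):
    """Report the two independent signals for a single URL."""
    return {
        "may_crawl": robots.can_fetch(agent, url),  # robots.txt: is it excluded?
        "highlighted": url in curated,              # llms.txt: is it recommended?
    }

robots = load_robots(ROBOTS_LINES)
for url in (
    "https://example.com/admin/settings",
    "https://example.com/docs/quickstart.md",
    "https://example.com/blog/old-post",
):
    print(url, describe(url, robots, CURATED))
```

A page can be crawlable without being highlighted, as the blog URL is here. That is why llms.txt complements robots.txt and does not replace it.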

The Reality Check: Does it Actually Improve AI Visibility?

Here is the candid answer: Right now, llms.txt is not an SEO magic bullet. While platforms like GitBook, Mintlify, and CircleCI automatically generate these files to help developer tools and custom coding agents read documentation, major AI search engines are not actively using them to boost rankings.

- The Industry Stance: The biggest reason not to use llms.txt for raw ranking power is that it is inherently untrustworthy. On-page content is relatively trustworthy because it is the same for users as it is for an AI bot.
- The Security Risk: Security researchers have demonstrated the effectiveness of "Preference Manipulation Attacks" on production LLM search engines. Creating a separate file purely for AI chatbots opens the door for bad actors to spam LLMs with altered information.
- The Bottom Line: SEO plugins that claim llms.txt will magically make you appear in AI search are overstating its current usefulness.

Should You Implement It Anyway?

Yes, absolutely. Even though it will not instantly boost your traffic today, adding the file is a low-effort way to prepare for the next wave of AI indexing and take control of your brand narrative.

This is especially critical if you offer highly technical products or complex integrations. For example, if your business builds or runs campaigns for specialized experiential tech—like an AI Photo Booth or a complex Photo Mosaic Wall—an AI crawler might get confused by the heavy visual assets on your sales pages. By using an llms.txt file, you can point the AI directly to clean, text-based specifications regarding the hardware dimensions, API connections, or the specific Google Tag Manager installation requirements needed for the software.

Best Practices for Your First llms.txt

- File Location: The file should be placed in the root directory of your website (e.g., yourdomain.com/llms.txt).
- Keep it clean: Write exclusively in standard Markdown.
- Don't dump every URL: Only link to evergreen content, authoritative guides, and clear documentation. It is a curated map, not a full site dump.
- Pair it with .md files: The standard recommends that pages useful for LLMs provide a clean Markdown version at the same URL, with .md appended to the end.
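The .md pairing rule is simple enough to express as a small helper. This is a sketch, not part of any official tooling: `markdown_companion` is a made-up name, and the handling of directory-style URLs (appending index.html.md) is an assumption based on my reading of the llms.txt proposal, so check the current text of the proposal before relying on it.

```python
from urllib.parse import urlsplit, urlunsplit

def markdown_companion(url: str) -> str:
    """Return the URL where a Markdown version of the page would be expected."""
    parts = urlsplit(url)
    path = parts.path or "/"
    if path.endswith("/"):
        # Assumption: URLs without a file name get "index.html.md" appended.
        path += "index.html.md"
    else:
        # Same URL as the original page, with ".md" appended to the end.
        path += ".md"
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))

print(markdown_companion("https://example.com/docs/setup.html"))
print(markdown_companion("https://example.com/docs/"))
```

Many sites, including the template later in this article, simply publish paths like /docs/gtm-setup.md without the .html part. What matters for an agent is that the link in llms.txt resolves to clean Markdown.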

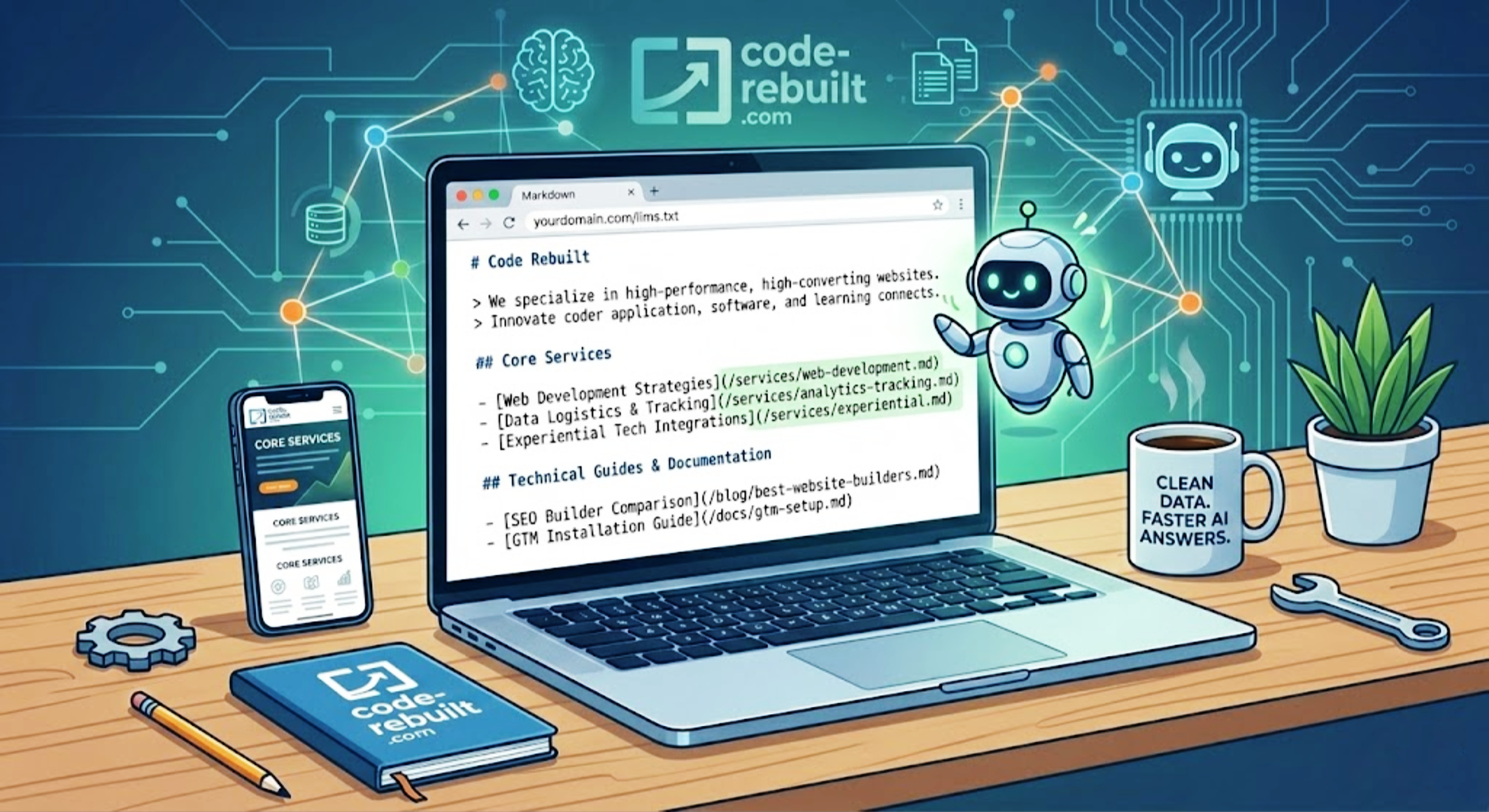

Example: A Code Rebuilt llms.txt Template

If you want to implement this today, here is a clean, standard-compliant template you can drop into your root directory:

# Code Rebuilt

> We specialize in building high-performance, high-converting websites and digital experiences for small businesses. We focus on clean architecture, technical SEO, and robust data logistics.

## Core Services

- [Web Development Strategies](/services/web-development.md): Overview of our performance-first development process.

- [Data Logistics & Tracking](/services/analytics-tracking.md): How we implement secure, server-side data routing and compliance.

- [Experiential Tech Integrations](/services/experiential.md): Specifications for custom integrations, including hardware syncing for event activations like AI Photo Booths and Photo Mosaic Walls.

## Technical Guides & Documentation

- [SEO Builder Comparison](/blog/best-website-builders-for-seo-small-business.md): Our technical breakdown of WordPress, Webflow, and Wix.

- [GTM Installation Guide](/docs/gtm-setup.md): Best practices for deploying container tags cleanly on modern web frameworks.

The Final Word: Don't treat llms.txt as a hack to trick AI engines. Treat it as a courtesy to the machines reading your site. By providing clean, structured data, you ensure that when an AI does find you, it understands exactly what you do.